The emergence of generative artificial intelligence (GenAI), led by Chat Generative Pre-Trained Transformer (ChatGPT), has not only changed the behaviour of digital media ecosystem users but also increased the energy consumption of enterprises working with GenAI, which presents them with a fundamental challenge in the era of climate change. This study examines the relationships between selected aspects of the use of GenAI tools and the environmental perception and behaviour of their users to understand the population's current attitudes towards environmental risks and environmental sustainability. The survey was conducted in October 2024 on a sample of 1,268 respondents from the population of the Czech Republic. To process the data set, logistic regression analysis, the chi-squared test, the Akaike information criterion, and the Bayesian information criterion are employed. The results show that the more often people use GenAI tools, the more distant in time they consider the effects of climate change to be. A low frequency of ChatGPT use may lead to a higher willingness to switch from popular GenAI tools that are not operated by environmentally friendly data centres. The frequency of ChatGPT use influences individuals’ perception of the importance of addressing climate change. The more frequently the respondents use artificial intelligence (AI) systems, the less important they perceive climate change to be. A low frequency of ChatGPT usage is associated with lower willingness to change email provider, transfer own data, leave social networks, stop using a favourite streaming platform and stop using a favourite GenAI platform. The respondents’ attitudes show a visible behavioural change. Internal personal motivation and self-confidence in learning, interest in career and self-confidence when using AI, the behavioural aspects, and the cognitive aspects are altered considerably.
Based on the outcomes of the population survey, the study concludes that the environmental friendliness of AI tools should become part of AI literacy, which could strengthen the population's willingness to use more energy-efficient GenAI platforms. These challenges are important in light of the latest technological development, as shown by the discussion on the energy and computational demands of the GenAI platform DeepSeek, which is also discussed in the study.

Artificial intelligence (AI), as a high technology, can transform not only many industries but also the way society functions. According to estimates, global spending on AI technologies across all industries in 2023 was 154 billion USD. Among the observed industries, the largest share of spending was in the banking sector, at 20.6 billion USD (Alzoubi and Mishra, 2024). Many AI technologies can significantly reduce greenhouse gas emissions in almost all industrial sectors. However, without sustainable energy sources and adequate ethical supervision, AI can seriously harm the environment and disadvantaged populations (Kelly, 2022). Vinuesa et al. (2020) found that, out of the 169 targets of the United Nations Sustainable Development Goals, AI can positively impact up to 134 targets and negatively impact 59 targets. AI systems powered by non-renewable resources can significantly reduce the environmental benefits of AI.

Higher AI performance is also associated with higher energy consumption, while a large amount of global energy consumption comes from non-renewable sources such as coal and natural gas. Energy consumption for AI has a considerable carbon footprint (Chen et al., 2024a). According to some experts, training GPT-3, with 175 billion parameters, consumed up to 1287 MWh of electricity and produced 552 tons of CO2 equivalent, the same amount produced by 123 gasoline-powered passenger cars in a year. Determining the exact energy consumption of a single generative AI model, which includes the energy required to manufacture the computational hardware and to develop and run the model, is difficult; thus, estimation is applied. The difficulty also stems from the potential fluctuation of parameter values owing to the rapid development of GenAI models.
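The GPT-3 figures quoted above imply two simple ratios that make the comparison easier to check: the carbon intensity of the electricity used and the annual emissions attributed to one passenger car. The following is a minimal sketch that treats the quoted values as given estimates; the variable and function names are hypothetical and not taken from any cited source.

```python
# Figures quoted in the text for GPT-3 (treated here as given estimates).
ENERGY_MWH = 1287.0        # electricity consumption in MWh
EMISSIONS_T_CO2E = 552.0   # emissions in tons of CO2 equivalent
CARS_PER_YEAR = 123        # gasoline-powered passenger cars, one year


def implied_carbon_intensity(emissions_t: float, energy_mwh: float) -> float:
    """Tons of CO2e emitted per MWh of electricity consumed."""
    return emissions_t / energy_mwh


def implied_emissions_per_car(emissions_t: float, cars: int) -> float:
    """Tons of CO2e attributed to one car per year in the comparison."""
    return emissions_t / cars


intensity = implied_carbon_intensity(EMISSIONS_T_CO2E, ENERGY_MWH)
per_car = implied_emissions_per_car(EMISSIONS_T_CO2E, CARS_PER_YEAR)

print(f"Implied carbon intensity: {intensity:.3f} t CO2e per MWh")
print(f"Implied emissions per car: {per_car:.2f} t CO2e per year")
```

Both ratios are only as reliable as the underlying estimates, which, as noted above, depend on what is included in the energy accounting.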

According to Xing and Monck (2023), new AI servers will have high energy demand, which raises concerns about environmental sustainability. Experts have argued that the power and functionality of AI will grow along with energy requirements, leading to the emergence of data server farms where reliable energy is available (Johnraja et al., 2024). AI's energy demand is considered incompatible with zero emissions, as highlighted by many critics and activists campaigning to protect the planet and its population. Despite the wide scientific consensus on the impact of climate change, many people do not perceive these processes as a global threat, which contributes to the differing attitudes towards climate change within the population.

In many countries, efforts are being made to mitigate the impact of climate change and create space for the adoption of environmental strategies (Akanwa et al., 2019; Fawzy et al., 2020). This has also stimulated the development of so-called green AI concepts that emphasise energy consumption, reduction of CO2 emissions from the use and training of AI models, and environmental sustainability. A wider AI ecosystem is gradually being constructed, which includes a large network of enterprises, organisations and individuals supporting the development, implementation and use of AI tools aimed at achieving sustainability (Prokop et al., 2024). The number of these green AI initiatives has been constantly growing (Bald, 2023), but their comprehensive classification is missing. Some authors have shown that green AI initiatives dominate fields such as efficient energy management and sustainable production (Wu et al., 2022; Verdecchia et al., 2023) or explored the wider implications of AI technologies for their sustainability. Some have investigated the technical aspects aimed at increasing the environmental efficiency of AI technologies; others have investigated hardware and software elements and the structure of deep learning (Lee & Kwon, 2017; Sellami and Tabbone, 2022) and deep-learning training (Tu et al., 2023), among others. Bouza et al. (2023) indicated that tools exist for monitoring energy consumption during model training and for optimising resource use to diminish their negative impact on the environment. Optimisation models also require cost quantification, for which methodologies and algorithms for social and environmental sustainability are appropriate (Chen et al., 2024b).

Measuring the total energy consumption of AI model production is especially difficult because the enterprises producing these models do not track these parameters. Nevertheless, studies quantifying the environmental impacts of AI have emerged (Vinuesa et al., 2020). Wu et al. (2022) examined the carbon footprint across the whole life cycle of model development, hardware lifetime, operational procedures and manufacturing phases. To reduce the total carbon footprint, the device's whole life cycle must be examined, and energy-efficient practices must be used in deep-learning training (Mehlin et al., 2023). Supporting green AI initiatives is required for a greener and more sustainable future and technological development. Some authors have considered introducing energy consumption as a success metric in deep learning for the purpose of green AI (Bae & Ha, 2021). Rohde et al. presented an evaluation framework with 19 sustainability criteria for sustainable AI. At the same time, growing evidence indicates that green AI initiatives have been making substantial progress and creating new opportunities for enterprises developing AI models (Amankwah-Amoah et al., 2024; Feuerriegel et al., 2024; Sedkaoui & Benaichouba, 2024). This is gradually creating a relationship between AI enterprises and sustainability, as well as social responsibility, which has not been adequately addressed within the framework of AI development.

These consistent findings demonstrate a strong interest on the part of both governments and the technology sector in a deeper investigation of the environmental and wider impacts of GenAI and, at the same time, in clearly defining the roles of actors in the digital ecosystem. The human user of GenAI finds themselves in a critical and visible position, generating increasing demand for green AI; thus, they can directly influence the environmental sustainability of AI development. In other sectors, environmental thinking and consumer behaviour have become a common phenomenon with so-called standard support tools and policies; conversely, in the field of information technology and innovative technologies, this depends on many cognitive and psychosocial aspects. It is also influenced by the attitudes and experiences of users with AI technologies in both work and private life. The investigation of these aspects defines a substantial research gap that this study attempts to fill, and the conceptual side and multidimensional levels of investigation of behavioural aspects in relation to GenAI tools represent the study's originality. No research studies have been conducted with this definitional framework and in this process structure so far, which provides a space for subsequent research and the potential for targeted policy development.

Theoretical background

GenAI using large language models has a strong and deep impact on every field of society, which distinguishes it from traditional communication systems such as interpersonal communication or mass media. According to Chen et al. (2024a), GenAI strengthens or weakens the position of various social groups and raises the question of ensuring fair benefits for all members of society. Concerns about the effects of GenAI on people and society are diverse and have been constantly growing. For instance, concerns have begun to arise about the loss of the critical thinking skills needed to recognise errors or bias in AI responses, as well as the possibility that computers will gradually be considered more unbiased than humans, leading people to unconditionally accept information generated by AI. Some concerns have also emerged about the population's strong dependence on AI, through which people will lose the ability or motivation to think independently, as well as concerns that inconvenient information will be considered false, or concerns about a decline in the intelligence level of society as a whole. For instance, accepting answers prepared in advance can reduce the population's motivation to push the programme to search for the right answers.

Cave and Dihal (2019) classified the elementary hopes and fears associated with AI into four categories, each pairing a hope with a parallel fear related to control: the hope of living much longer and the fear of losing identity; the hope of living without work and the fear of being redundant; the hope of fulfilling all human desires with the help of AI and the fear of alienation; and the hope of gaining power over others through AI and the fear that AI will turn against humans. AI remains highly ambiguous, both in political and cultural contexts. Without defining the ethical and technological boundaries of AI, managing and interacting with AI systems will be particularly difficult (Stahl et al., 2022).

The wider societal impacts of AI have begun to be perceived critically by many people as they are related to wider economic and social issues (Mikalef et al., 2022). Many people also have exaggerated expectations of the use of AI and its strong impact on various aspects of life, especially regarding employment and energy consumption (Dwivedi et al., 2021). Nonetheless, they are exposed to information asymmetry and missing information. The strong potential of AI has also identified endangered professions, such as doctors, lawyers, journalists, psychologists, artists, and service providers, implying a strong intervention in labour market policy and the restructuring of human resources.

Use of GenAI in relation to climate change perception

Technological development also brings new perspectives on environmental policy, which is evident from the development of opposing attitudes towards technologies and their impacts (Leipold et al., 2019; Alkaf et al., 2023). Various emerging activist groups promote negative perceptions of AI, criticise mainstream climate programmes and provoke resistance and anti-reflexive tendencies towards environmental policies and issues. Some extreme views present climate change as a scam (Lewandowsky, 2021). Several studies have warned of the risks of organised contrarianism, which enables the targeted spread of disinformation about climate change (Coan et al., 2021; Almiron et al., 2023). This can cause confusion in public perception, deepen political polarisation and weaken efforts to adapt tools to mitigate the impacts of climate change.

Climate change disinformation can also be created and financed by a network of actors: politicians, media, sponsors from the industry and energy sectors, and contrarian online bloggers (Treen et al., 2020). The absence of regulation, as well as the high level of anonymity of social platforms, creates the conditions for the spread of anti-mainstream content about climate change (Vasiljev, 2024). The lack of enforceable regulation of AI on a global scale may worsen racist and nationalist prejudices, leading to extensive economic, social and environmental damage (Vinuesa et al., 2020). Pearce et al. (2019) recommended studying blogs to understand climate change disinformation that spreads quickly and effectively through social networking platforms and thereby to filter and eliminate forms of climate disinformation dissemination. Nowadays, an interdisciplinary approach and the collaboration of many stakeholders are essential to develop effective solutions to eliminate harmful online disinformation processes (Saura et al., 2023).

How the population perceives the development, deployment and use of AI, and how this perception changes over time and under the influence of various socio-economic factors, remains unclear. The most elementary view of the perception of AI is its association with strong optimism or deep pessimism, as presented by Cave and Dihal (2019). This polarity also explains the visible gap between common AI narratives and the expected impacts of AI on society (Hudson et al., 2023), as well as the need for the population's environmental literacy.

Recently, the role of GenAI in engaging with social issues such as climate change, racial justice, geopolitical issues, and health inequality, has been increasingly explored (Chatterjee, 2024). Few studies have examined how GenAI can help address these topics across diverse user groups. Chen et al. (2024b) reflected on this fact and applied an extensive audit of algorithms to evaluate dialogues between the third version of ChatGPT and participants with different perspectives on climate and racial justice issues as well as with different user experiences and conversational styles based on socio-demographic factors. The outcomes showed that users with less education were less likely to engage in AI conversations, while more educated respondents showed significant changes in the attitudes towards climate change. Thus, if AI is designed to be inclusive in relation to the different educational and other socio-economic parameters, it can be an effective tool to change public perspectives and attitudes and can also have strong educational potential (Galaz et al., 2021).

The preferences and perceptions of AI aspects vary among users. Ioku et al. examined the preference parameters for selecting AI assistants such as transparency, environmental sustainability, and performance. Respondents preferred transparency over performance but performance over environmental sustainability. Future-oriented consumers placed greater emphasis on environmental sustainability than present-oriented consumers. Sarathchandra and Haltinner (2021) explored the perceptions of the population that is sceptical of climate change and believes that it is a hoax. They confirmed the influence of socio-demographic and socio-political factors on climate scepticism. Sceptics were more likely to be male, politically conservative, older, more religiously oriented, better educated and with higher income levels.

Some studies have also pointed to the importance of investigating the implications of GenAI on electronic waste and its management strategies. According to Wang et al. (2024), implementing circular economy strategies along the green AI value chain could reduce electronic waste generation by 16 % to 86 %, increasing the importance of proactive electronic waste management regarding green AI technologies.

Alzoubi and Mishra (2024) summarised the green AI initiatives to date into six main areas: cloud-based optimisation tools, model efficiency tools, carbon footprint tools, sustainability tools, open-source initiatives and green AI communities. Kirkpatrick et al. (2024) confirmed that government organisations are already beginning to recognise the importance of green AI, even though specific regulations are not yet in place. The European Union has also taken a proactive stance towards AI policies. From the perspective of the mandate of international organisations such as the United Nations and Organisation for Economic Co-operation and Development, clear efforts are being made to create global standards for AI governance.

Some authors have pointed to the need to quantify and take into account the social and environmental impacts of GenAI – such as energy and resource consumption, working conditions and differences in accessibility – and criticise their insufficient consideration in development and deployment (Hosseini et al., 2024). Not all social and environmental impacts are clear and quantifiable; who should manage the calculation and mitigation of these impacts and what tools and mechanisms are appropriate to use remains unclear.

Environmental impact of data centres and their influence on changing behaviour of GenAI users

In connection with the digital transformation of the global economy, data generation and processing using cloud computing, big data analytics and online services, which are essential for many financial and business operations, have been increasing (Edwards et al., 2024). Energy-intensive applications also put strong pressure on data centres and the expansion of their capacities. There are more than 6000 data centres worldwide, and their number is expected to grow by 15 % annually. One-third of them are located in the United States (Brocklehurst, 2021; Hogan, 2023). Recently, research studies have promoted the importance of data centres within digital infrastructures (Johnson, 2019; Ortar et al., 2022) and presented this as an interdisciplinary research field (Ávila-Robinson & Sengoku, 2017; Nowakowski et al., 2019; Saura et al., 2022). The rapid expansion of data centres is also associated with an increase in energy consumption. Information and communication technologies contribute an estimated 2.1 % to 3.9 % of global carbon emissions, and data centres account for an estimated 45 % of this footprint (Dobbe & Whittaker, 2019; Freitag et al., 2021).
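To make the two shares above easier to interpret, they can be combined into an implied share of global carbon emissions attributable to data centres. The sketch below assumes that the 45 % figure refers to the information and communication technology footprint rather than to global emissions; the names are hypothetical and the result is only illustrative.

```python
# Shares quoted in the text, expressed as fractions.
ICT_SHARE_LOW = 0.021            # lower estimate of ICT share of global emissions
ICT_SHARE_HIGH = 0.039           # upper estimate of ICT share of global emissions
DATA_CENTRE_SHARE_OF_ICT = 0.45  # assumed to be a share of the ICT footprint


def data_centre_share_of_global(ict_share: float,
                                dc_share_of_ict: float = DATA_CENTRE_SHARE_OF_ICT) -> float:
    """Implied share of global emissions attributable to data centres."""
    return ict_share * dc_share_of_ict


low = data_centre_share_of_global(ICT_SHARE_LOW)
high = data_centre_share_of_global(ICT_SHARE_HIGH)

print(f"Implied data-centre share of global emissions: {low:.2%} to {high:.2%}")
```

Under this assumption, data centres alone would account for roughly one to two per cent of global emissions.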

AI tools associated with deep learning and generative models require huge computing resources associated with energy-intensive hardware, graphics processors and tensor processing units, which consume much more energy than traditional processors (Ermakov, 2024). The growing needs of AI data centres may compete with the energy demands of other sectors and various electrified industries. According to Dhar et al. (2022), the emissions produced by a data centre can vary by up to 40 times depending on the local grid's energy sources. Hence, countries must create energy strategies that ensure investments in the modernisation of energy infrastructure and networks and also integrate renewable energy, so that energy is not only reliable but also affordable.

Data centres will be more deeply integrated into everyday life and will need to adapt quickly to manage the rising volume of information. Training AI models – especially deep-learning and generative models – requires processing large data sets over long time periods and with continuous calculations on thousands of high-performance graphics processing units and tensor processing units. The computational and energy demands of AI will grow exponentially, making AI technologies one of the most energy-intensive technologies. AI is now part of the most important sectors, such as healthcare, finance, defence and services. Analytical reports confirmed that since the year 2020, global demand for electricity in data centres has grown at an average annual rate of 20 % and exceeded 400 TWh by 2023. Currently, energy consumption in data centres represents approximately 1.6 % of global electricity consumption. According to the latest projections (Data Age, 2025), global data volumes could reach 335 ZB by the year 2030 – up from 64 ZB in the year 2020. Some scenarios for data centres and AI predict a substantial increase in electricity demand from 414 TWh in 2023 to 770 TWh to 1560 TWh by 2030 (Ermakov, 2024), with the most realistic scenario predicting a 174 % increase to 1135 TWh by 2030. This increase represents a critical challenge for energy systems. Hence, ensuring the continuous operation of data centres and meeting their growing energy needs will be necessary. Anderson (2024) reports global energy consumption of data centres in 2022 at approximately 460 TWh and expects it to double to >1000 TWh by 2026, which is, for instance, approximately equal to the total electricity consumption of Japan.
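The scenario figures above can be checked with simple arithmetic: the percentage increase from the 2023 baseline and the constant annual growth rate each scenario would imply. The following is a minimal sketch using only the values quoted in the text; the scenario labels and function names are hypothetical, and the constant-growth assumption is a simplification rather than part of the cited projections.

```python
# Values quoted in the text (TWh of electricity demand).
BASE_YEAR, BASE_TWH = 2023, 414.0
TARGET_YEAR = 2030
SCENARIOS_TWH = {"low": 770.0, "central": 1135.0, "high": 1560.0}


def percent_increase(start: float, end: float) -> float:
    """Total increase from start to end, in per cent."""
    return (end - start) / start * 100.0


def implied_annual_growth(start: float, end: float, years: int) -> float:
    """Constant annual growth rate (as a fraction) that takes start to end."""
    return (end / start) ** (1.0 / years) - 1.0


years = TARGET_YEAR - BASE_YEAR
results = {
    name: (percent_increase(BASE_TWH, twh),
           implied_annual_growth(BASE_TWH, twh, years))
    for name, twh in SCENARIOS_TWH.items()
}

for name, (increase, growth) in results.items():
    print(f"{name}: +{increase:.0f} % by {TARGET_YEAR}, "
          f"about {growth:.1%} per year")
```

The central scenario reproduces the 174 % increase stated above, which corresponds to compound growth of roughly 15 % per year over the seven-year period.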

The latest results published in the Gas Outlook 2050 (Naderian et al., 2024) highlighted an increase in energy demand from data centres and AI and, at the same time, an increase in uncertainties related to the problematic estimation of the extent of their growth. So far, no analyses have been conducted on this issue, but various scenarios have been developed based on forecasts of the development of data centres and AI by 2030. Many factors influencing the progress of data centres might not yet have been identified. They arise simultaneously with technological development and increasing global pressure for smart households, cities and industries. A steep increase in data generation is also expected, requiring considerable computing power. This raises concerns that subscribers will have to pay higher electricity rates to accommodate the development of AI, regardless of whether they obtain direct benefits. Big data, machine learning model developments and AI will play an important role in the energy market in the future (Ahmad et al., 2021).

Position of AI literacy in the concept of green artificial intelligence

Arif and Changxiao (2022) confirmed that AI literacy and subjective AI norms can positively influence the population's attitudes towards GenAI technologies and their intention to use GenAI. According to many experts, the environmental dimensions belong among the ethical dimensions. Environmental ethicists highlight the environmental dimensions of AI, such as its environmental footprint, as part of the ethical dimensions of AI and consider them an important factor in AI adoption.

Frank et al. (2021) explored the differences between technologies, consumers and countries in generating demand for AI products but did not rely on environmental considerations to examine the effects of utility on demand for AI products. Yigitcanlar et al. (2024) examined the drivers of public perception of AI, confirming that perceptions of AI may vary by local context. The most important factors influencing public perception of AI include sex, age and AI knowledge and experience. Although AI literacy contributes to building trust and minimising public concerns about AI, the extent of its impact on trust in and adoption of AI may differ across geographical and socio-economic contexts.

Galaz et al. (2021) warned about the systemic risks to AI sustainability owing to the quick spread of AI technologies into new social, economic and ecological contexts and thus indirectly drew attention to the rising demand for AI literacy and the necessary systemic process of its development. Conceptualising the development of AI literacy will be demanding not only in procedural and technological but also in legislative and legal terms. Unexpected social and ecological effects have been insufficiently investigated so far, and the potential social, economic and ecological risks of AI might recently have been overlooked. The increase in these risks is related to the increased interconnectedness among people, machines and socio-ecological systems.

Asrifan et al. (2025) examined the importance of AI literacy for eliminating these reported risks and ensuring certainty, increasing ethical awareness and informed decision-making. According to the authors, AI literacy should include a comprehensive understanding of AI principles, including machine learning, data governance and algorithmic ethics, allowing users to engage with AI technologies in various sectors and perceive the environmental demands of AI.

Kong et al. (2023) emphasised the importance of promoting AI literacy in the educated population and critically highlighted that research on effective AI literacy programmes’ contents is insufficient. According to the authors, AI literacy represents a multi-conceptual framework consisting of multiple dimensions, including users’ ethical awareness.

Ooi et al. (2023) explored the dynamic field of AI applications, including GenAI, and pointed to the need for a strategic, multi-level approach to increasing AI literacy. They did not explicitly focus on AI literacy in relation to the energy intensity of AI, but the ethical dimension in the proposed AI literacy strategies may integrate these aspects. Adnan et al. (2024) argued that research on the environmental impacts of AI and big data is still in its infancy and that the societal benefits of AI may support the achievement of sustainable development goals that may not be in line with environmental sustainability. The number of electronic devices using AI has risen considerably, raising serious concerns about environmental risks. Hence, building a trustworthy AI ecosystem is necessary.

Scantamburlo et al. (2024) observed that public perception of AI is inappropriate because it is based on a lack of understanding of AI technologies and their impact, a lack of relationships between AI technologies and policy initiatives, and a low level of interest in increasing AI literacy. Supporting AI literacy is one of the key factors in building a trustworthy AI ecosystem.

While AI literacy systems are important for enhancing the perception and evaluation of the environmental aspects of GenAI, other supporting mechanisms, which can quantify and compare many environmental aspects of AI and thus improve the use of AI literacy effects, are also important for improving awareness, trust and acceptance of AI. In this context, Gupta et al. (2024) drew attention to the effects of new metrics and methodologies that should facilitate public acceptance of green AI and pointed to awareness of sustainable practices in the AI life cycle.

Green AI projects that support energy-efficient algorithms, environmentally friendly hardware design and clean performance in AI infrastructure can reduce the negative environmental impacts of AI models (Alzoubi & Mishra, 2024; Malkova, 2025). Stricter environmental regulation from government and regulatory authorities can also provide considerable support for green AI, which can also stimulate the development of green projects (Polyakov et al., 2021; Radavičius & Tvaronavičienė, 2022). Some authors explored strategies to diminish the ecological footprint of AI models (Wang et al., 2023; Chauhan et al., 2024) and the effectiveness of innovative green projects (Levický et al., 2022; Piccinetti et al., 2023). Sponsoring green projects can attract environmentally oriented supporters, investors and consumers and thus improve the image of these socially responsible entities. This will contribute to building trust and loyalty among institutions and stakeholders that prioritise environmental interests in the development and use of AI.

Recent technological development – including the large language model DeepSeek – is strong evidence of the importance of AI literacy development in relation to environmentalism, the AI energy consumption issue and trust in AI. DeepSeek has brought new insights into the AI sector development as well as its sustainability in relation to global environmental goals.

Future development of large language models regarding AI environmental sustainability

In 2023, the release of the large language model DeepSeek was announced. DeepSeek can redefine the technological landscape by making advanced capabilities accessible to under-represented communities while strongly promoting not only ethical but also inclusive innovation (Peng et al., 2025; Sallam et al., 2025). This openness also brings many existential risks. At the same time, the release of DeepSeek has generated strong discussions about the computational and energy demands of GenAI (Normile, 2025).

Conventional thinking, based on the premise that building the biggest and best new AI models requires much hardware and hence energy, has been overturned by DeepSeek. DeepSeek models require considerably less energy than other models with similar performance. This fundamentally changes the focus of AI development from model performance to resource efficiency, and many experts state that this shift has occurred earlier than expected (Roumeliotis et al., 2025). However, the increased efficiency of large language models may not automatically lead to a reduction in overall energy consumption, as AI experts fear that innovations related to DeepSeek, through their everyday integration into computing, will drive wider deployment (Parmar & Govindarajulu, 2025). This also stems from the advantages of DeepSeek itself; for instance, unlike DeepSeek, the code of the newer ChatGPT models is closed-source, that is, not publicly available for replication or use by others. DeepSeek lowers the barriers to entry for start-ups and supports competition and AI democratisation (Krause, 2025). Technology enterprises inspired by the DeepSeek approach can create their own similar low-cost models; thus, the preconditions for decreasing energy consumption in the future are lost. Several experts predict that even though DeepSeek has achieved energy efficiency, it will not reduce the overall energy consumption of GenAI as much as the AI sector had expected in the long term (Gupta, 2025).

AI enterprises have begun to spend more on training models and, hence, to consume more energy because it brings them higher profit. Thus, the development of more intelligent models is limited primarily by enterprises’ financial resources (O'Donnell, 2025). Therefore, regarding DeepSeek's rise, a more intense discussion has begun at many expertise levels about the energy consumption of AI and its further development conditioned by energy intensity and sustainability (Cappendijk et al., 2024).

Many technology studies published by MIT Technology Review call for a more detailed examination of the energy costs of DeepSeek and similar models that will play a significant role in the decision-making processes related to the development of GenAI in the near future (O'Donnell, 2025). These aspects have already been explored by Jagannadharao et al. (2023), who created an overview of the trade-offs between emission reduction and operational load while calling for the progressive development of carbon-sensitive computing. Many other studies have also drawn attention to the important issue of exploring sustainable computing and supporting the creation of environmentally aware strategies for training large language models (Liu et al., 2024; Gupta, 2025).

These aspects are crucial in defining the main goal of this study and designing this research, in which it is considered critical to investigate public awareness of the environmental aspects of AI that may play a decisive role in the development, adoption and implementation of GenAI tools in the future. The study outcomes also support the development of a platform for an AI literacy system, which, in the conditions of the development of massive AI models, will play a key role in adopting AI tools in countries’ digital ecosystems and thus in gaining decisive competitive power on a global scale.

Research formulation

The review of the research studies presented is clear evidence of their considerable fragmentation, which is related to their target setting as well as to the defined research framework and other specific research parameters. This reduces their comparative potential, mainly owing to their methodological and data heterogeneity as well as the causal relationships investigated. The common elements of these studies are the appeal for a systematic investigation of the perception of environmental issues in the field of technological development and the assignment of new roles to users of AI tools as subjects influencing the sustainability of AI tools and technologies development. Many research studies have pointed to the strong potential of AI literacy systems and their future role in changing user behaviour.

Based on the literature review, the following research questions are formulated:

- research question 1: What is the relationship between the use of GenAI and the perception of climate change in the Czech population?
- research question 2: How willing is the Czech population to accept a change in behaviour owing to the environmental impact of data centres or GenAI in the digital media ecosystem?
- research question 3: What is the relationship between AI literacy and declared behavioural change owing to the environmental demand of GenAI?

This paper comprises six sections. Firstly, the Introduction addresses the discussed field of AI as a high technology of the future. Secondly, the literature review offers the theoretical background for all three research questions structured according to them with their formulation at its end. Thirdly, the Data and Methodology section describes the data set and the employed methodological techniques, whose outcomes are demonstrated in the fourth analytical section, which is also built according to the research questions. Fifthly, the Discussion compares the obtained findings with other research studies. Finally, the Conclusion summarises all the outcomes and makes suggestions for future research.

Data and methodology

Data

The data set encompassed several groups of examined questions from the questionnaire. The interviewees responded to four groups of statements.

The following questions were included in the first group:

- AI1: How often do you currently use the ChatGPT system?
- AI2: Have you tried the Gemini system based on AI?
- AI3: Have you tried the Microsoft Copilot system based on AI?
- AI4: Have you tried the Midjourney system based on AI?
- AI5: Have you tried the DALL-E system based on AI?
- AI6: Have you tried the Stability AI system based on AI?
- AI7: Have you tried the Wombo AI system based on AI?
- AI8: Have you tried the Canva system based on AI?
- AI9: Have you tried the Synthesis system based on AI?
- AI10: Have you tried the Murf AI system based on AI?

The possible responses to the first question were very often, often, sometimes and not currently, while the responses to the remaining questions were yes or no.

The second group of questions was related to environmental issues:

- E1: How important is solving the climate change issue for you personally?
- E2: Choose one of the following statements related to climate change that is the closest one to your attitude.
- E3: How well do you know what a data centre is and what it serves for?
- E4: After learning more details about the environmental impact of technology enterprises’ data-centre operations, how willing would you be to take the next steps?

The first question was answered on a scale of 1 to 7, with the lowest value showing the highest importance and the highest value showing the lowest importance.

For the second question, the respondents could select among the following options:

- option 1: Climate change is already considerably affecting life around me.
- option 2: Climate change will considerably affect life around me in the next five years.
- option 3: Climate change will considerably affect the life around me in the next 6 to 10 years.
- option 4: Climate change will considerably affect life around me in the next 11 to 25 years.
- option 5: Climate change will not considerably affect life around me even after the next quarter of a century.

The third environmental question was answered on a four-point scale, where the respondents selected the following options:

- option 1: I know it very well.
- option 2: I know it little.
- option 3: I do not know well.
- option 4: I do not know it at all.

The fourth environmental question E4 was answered on a seven-point scale, with the lowest value meaning to be very willing and the highest value meaning to be very reluctant to take the following steps:

- option 1: To change one's own email address or go to the email service provider that uses more energy-efficient and water-cooled data centres.
- option 2: To transfer own data to a provider that uses more energy-efficient and water-cooled data centres.
- option 3: To leave a favourite social network that does not use energy-efficient and water-saving opportunities in its data centres.
- option 4: To stop using a favourite streaming platform that does not allow energy-efficient and water-saving opportunities in its data centres.
- option 5: To stop using a favourite generative AI platform that does not allow energy-efficient and water-saving opportunities in its data centres.

The third group of questions is related to the use of social networks:

- SN1: How often do you use social networks, where you have created your own profile and share posts – photos and videos (for instance, Facebook, X, Instagram, TikTok, Snapchat)?
- SN2: How often do you use the communication platforms that allow the exchange of messages and multimedia files (for instance, WhatsApp, Messenger, Telegram Messenger, Signal, iMessage, Rakuten Viber, Kik Messenger, and so on)?

The responses to these questions are on a seven-level scale, whose individual levels are: several times per day; once per day; several times per week (two to six times per week); once per week; less often than once a week but at least once a quarter; less often than once per quarter; and not at all.

The fourth group comprises four series of statements, a total of thirty-nine statements related to various fields of the personal perception of AI.

The first series of the statements expresses the internal personal motivation and self-confidence in learning and includes the following nine statements:

- SA1: Artificial intelligence is important in my everyday life (for instance, personal life, working life).
- SA2: I like to study the field of AI.
- SA3: Self-education in the field of AI enriches my life.
- SA4: I am interested in discovering new AI technologies.
- SA5: I believe that I am able to perform tasks related to AI.
- SA6: I am sure that I am able to handle projects related to AI well.
- SA7: I believe that I can gain the knowledge and skills related to AI.
- SA8: I am sure I will achieve good results in AI tests.
- SA9: I am convinced that I understand AI.

The second series of the statements demonstrates the interest in career and self-confidence in AI use and includes the following ten statements:

- SB1: Knowledge of AI will help me find a good job in the future.
- SB2: Knowledge of AI will give me an advantage in my future career.
- SB3: Understanding AI will contribute to my future profession.
- SB4: My future career will include AI.
- SB5: In my work, I will use skills in the field of problem solving using AI.
- SB6: I am able to use tools related to AI well.
- SB7: I am sure that I am able to perform activities involving the use of AI.
- SB8: I believe that I am able to perform tasks related to the involvement of AI.
- SB9: I believe that I will learn to understand the fundamental AI concepts.
- SB10: I believe that I am able to choose suitable applications of AI to solve problems.

The third series of the statements is related to the behavioural aspects and includes the following eleven statements:

- SC1: I will continue to use AI in the future.
- SC2: I will try to keep up with the latest technologies in the field of AI.
- SC3: In the future, I plan to devote time to explore new features of AI applications.
- SC4: I actively participate in educational activities focused on AI.
- SC5: I am passionate about studying materials about AI.
- SC6: I learn effectively while completing tasks.
- SC7: I often look for other materials about AI such as books or magazines in my free time.
- SC8: I often try to explain the teaching material about AI to my classmates, colleagues, or friends.
- SC9: I try to collaborate with classmates, colleagues, or friends to complete tasks and projects focused on AI.
- SC10: In my free time, I often discuss AI with classmates, colleagues, or friends.
- SC11: When I run into a problem in the activities related to AI, I usually ask classmates, colleagues, or friends for help.

The fourth series of the statements is related to the cognitive aspects and evaluation and includes the following nine statements:

- SD1: I know what AI is and I remember its definitions.
- SD2: I know how to use AI applications (for instance, Siri, chatbots).
- SD3: I know some of the fundamental principles of how AI works (for instance, linear model, decision tree, machine learning).
- SD4: I understand how AI perceives the world (for instance, seeing, hearing) to solve various tasks.
- SD5: I am able to compare different concepts of AI (for instance, deep learning, machine learning).
- SD6: I am able to apply AI to solve problems.
- SD7: I am able to create a machine learning model to solve problems.
- SD8: I am able to solve problems through involving AI (for instance, chatbots, robotics).
- SD9: I am able to evaluate applications and concepts of AI for different situations.

Each of the listed statements is answered on a seven-level scale from 1 to 7, while the lowest value represents strong agreement and the highest value means strong disagreement to the particular statement.
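The structure of the fourth group can be summarised in a short sketch. The Python snippet below is illustrative only: the names `SERIES_SIZES`, `statement_ids` and `is_valid_response` are hypothetical and are not part of the survey instrument. It merely rebuilds the statement codes listed above and checks that a response lies on the seven-level scale.

```python
# Illustrative sketch only; all names are hypothetical, not taken from the study.
# Number of statements in each of the four series of the fourth group.
SERIES_SIZES = {"SA": 9, "SB": 10, "SC": 11, "SD": 9}


def statement_ids(series_sizes=SERIES_SIZES):
    """Return the statement codes 'SA1' ... 'SD9' in questionnaire order."""
    return [
        f"{prefix}{number}"
        for prefix, size in series_sizes.items()
        for number in range(1, size + 1)
    ]


def is_valid_response(value):
    """True for an integer from 1 (strong agreement) to 7 (strong disagreement)."""
    return isinstance(value, int) and 1 <= value <= 7


ids = statement_ids()
print(len(ids), ids[0], ids[-1])
```

Summing the four series in this way gives the thirty-nine statements mentioned in the text.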

Inspiration for construction of the above-listed questions comes from several studies – questioning data centre attitudes (Seth, 2024) and the statements about AI (Gursoy et al., 2019; Yigitcanlar et al., 2024).

Methodology

The data set was collected through an online survey conducted by a professional surveying agency in the Czech Republic from 18 October 2024 to 23 October 2024. A total of 2710 respondents were addressed, of which 1268 returned the completed survey. Overall, 1252 questionnaires were answered by respondents aged over 18 years. The respondents’ selection was based on a quota selection. Data collection was conducted through computer-assisted self-interviewing via the website populace.cz and was supervised by the agency Ipsos. The average period for answering the questionnaire was about 30 min. The main methodological technique for analytical processing in this study was regression analysis (Galton, 1989), specifically logistic regression. To adopt this technique, testing was performed. A chi-squared test was employed to reveal the distribution state of the input data (Kenney, 1939; Pearson, 1893) and uncover inner relationships between the examined variables. Confidence intervals were determined at a level of 95 %. A five-per-cent statistical significance threshold was employed to evaluate the determined hypotheses. Robustness was checked via the information criteria, namely the Akaike information criterion and the Bayesian information criterion. All analytical procedures were conducted in the R software environment (R Core Team, 2024).
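For orientation, the quantities named above can be sketched in a few lines. The study itself was carried out in R; the Python below is only an illustration with hypothetical function names and a made-up contingency table, not survey data. It computes the Pearson chi-squared statistic for a test of independence, together with the standard definitions of the Akaike information criterion (2k − 2 ln L) and the Bayesian information criterion (k ln n − 2 ln L), where k is the number of parameters, n the number of observations and ln L the maximised log-likelihood.

```python
import math


def chi_squared_statistic(table):
    """Pearson chi-squared statistic and degrees of freedom for a contingency table."""
    row_totals = [sum(row) for row in table]
    col_totals = [sum(col) for col in zip(*table)]
    total = sum(row_totals)
    statistic = 0.0
    for i, row in enumerate(table):
        for j, observed in enumerate(row):
            # Expected count under independence of rows and columns.
            expected = row_totals[i] * col_totals[j] / total
            statistic += (observed - expected) ** 2 / expected
    dof = (len(table) - 1) * (len(table[0]) - 1)
    return statistic, dof


def aic(log_likelihood, n_params):
    """Akaike information criterion: 2k - 2 ln L."""
    return 2 * n_params - 2 * log_likelihood


def bic(log_likelihood, n_params, n_obs):
    """Bayesian information criterion: k ln n - 2 ln L."""
    return n_params * math.log(n_obs) - 2 * log_likelihood


# Hypothetical 2 x 2 table of counts (NOT survey data).
counts = [[10, 20], [20, 10]]
stat, dof = chi_squared_statistic(counts)
print(round(stat, 4), dof)
```

The single-variable tests reported later may follow a goodness-of-fit variant of the test; the sketch covers only the independence case.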

In the tables of the following section, statistically significant outcomes are highlighted by shading cells in grey: the darker the shade, the more statistically significant the outcome. Only outcomes that are not statistically significant remain white. Four levels of statistical significance are visualised: 10 %, 5 %, 1 %, and 0.1 %. For the Akaike information criterion and the Bayesian information criterion, highlighting is applied in a similar way, meaning the darker the shade, the more suitable the outcome. Here, the values are sorted from the highest value, which has the darkest shade, to the lowest value, which has the lightest shade.
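The four significance levels can be expressed as a simple lookup. The sketch below is a hypothetical helper, not the authors' code; it assumes strict inequalities at each threshold, which the text does not specify.

```python
# Thresholds ordered from the strictest (darkest shade) to the most lenient.
# Assumption: a p-value qualifies for a level when it is strictly below the threshold.
SIGNIFICANCE_LEVELS = [
    (0.001, "0.1 %"),
    (0.01, "1 %"),
    (0.05, "5 %"),
    (0.10, "10 %"),
]


def significance_label(p_value):
    """Return the strictest significance level met by p_value, or None (white cell)."""
    for threshold, label in SIGNIFICANCE_LEVELS:
        if p_value < threshold:
            return label
    return None


for p in (6.55e-4, 3.60e-3, 3.23e-2, 9.54e-2, 4.29e-1):
    print(p, significance_label(p))
```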

Analysis

The analytical section comprises three subsections based on the individual RQs. Each subsection is divided into the testing and modelling phase.

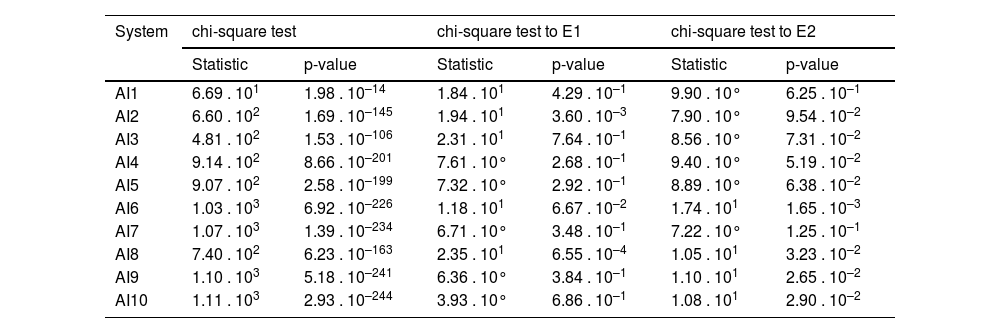

Perception of climate change

RQ 1 is linked to the Czech population's relations between the use of GenAI and perception of climate change. Four questions from the questionnaire are interconnected: frequency of current ChatGPT use, use of the other GenAI systems, personal importance of the climate change solution and period of climate change. Table 1 demonstrates the systems testing and their relationships to the respondents’ environmental attitudes.

Table 1. Perception of climate change testing.

| System | chi-square test: statistic | chi-square test: p-value | chi-square test to E1: statistic | chi-square test to E1: p-value | chi-square test to E2: statistic | chi-square test to E2: p-value |
|---|---|---|---|---|---|---|
| AI1 | 6.69 × 10^1 | 1.98 × 10^-14 | 1.84 × 10^1 | 4.29 × 10^-1 | 9.90 × 10^0 | 6.25 × 10^-1 |
| AI2 | 6.60 × 10^2 | 1.69 × 10^-145 | 1.94 × 10^1 | 3.60 × 10^-3 | 7.90 × 10^0 | 9.54 × 10^-2 |
| AI3 | 4.81 × 10^2 | 1.53 × 10^-106 | 2.31 × 10^1 | 7.64 × 10^-1 | 8.56 × 10^0 | 7.31 × 10^-2 |
| AI4 | 9.14 × 10^2 | 8.66 × 10^-201 | 7.61 × 10^0 | 2.68 × 10^-1 | 9.40 × 10^0 | 5.19 × 10^-2 |
| AI5 | 9.07 × 10^2 | 2.58 × 10^-199 | 7.32 × 10^0 | 2.92 × 10^-1 | 8.89 × 10^0 | 6.38 × 10^-2 |
| AI6 | 1.03 × 10^3 | 6.92 × 10^-226 | 1.18 × 10^1 | 6.67 × 10^-2 | 1.74 × 10^1 | 1.65 × 10^-3 |
| AI7 | 1.07 × 10^3 | 1.39 × 10^-234 | 6.71 × 10^0 | 3.48 × 10^-1 | 7.22 × 10^0 | 1.25 × 10^-1 |
| AI8 | 7.40 × 10^2 | 6.23 × 10^-163 | 2.35 × 10^1 | 6.55 × 10^-4 | 1.05 × 10^1 | 3.23 × 10^-2 |
| AI9 | 1.10 × 10^3 | 5.18 × 10^-241 | 6.36 × 10^0 | 3.84 × 10^-1 | 1.10 × 10^1 | 2.65 × 10^-2 |
| AI10 | 1.11 × 10^3 | 2.93 × 10^-244 | 3.93 × 10^0 | 6.86 × 10^-1 | 1.08 × 10^1 | 2.90 × 10^-2 |

Source: Own elaboration by the authors.

As visualised by Table 1, each examined system on its own meets the criterion of random distribution of probability by a large margin. Moreover, the general question related to frequency of ChatGPT use shows the same outcome. Regarding the relationship between the particular GenAI systems and the importance of solving the climate change issue, outcomes vary. While the relationships between the use of Google Gemini, Microsoft Copilot and Canva and the importance of solving the climate change issue are assigned random distribution of probability, other systems, among which are Midjourney, DALL-E, Stability AI, Wombo AI, Synthesis and Murf AI, demonstrate bias that requires further investigation. However, from the perspective of the relationship between the systems and the climate-change impact period, more systems carry bias. While Google Gemini, Microsoft Copilot, Midjourney, DALL-E and Wombo AI show random distribution of probability, Stability AI, Canva, Synthesis and Murf AI point to certain bias. All systems demonstrating random distribution only slightly overstep a five-per-cent statistical significance threshold with the exception of Wombo AI, which oversteps a ten-per-cent statistical significance threshold to a little extent. Conversely, Canva, Synthesis and Murf AI come close to the statistical significance threshold of 5 %. From both perspectives, these outcomes need to be further investigated.

The analytical outcomes demonstrate the following findings. The frequency of use of ChatGPT has an impact on the perception of the importance of solving climate change. Other GenAI systems show that for Google Gemini, Microsoft Copilot and Canva, no relation exists between the frequency of use of ChatGPT and the perception of the importance of solving climate change. Other systems, such as Midjourney, DALL-E, Stability AI, Wombo AI, Synthesis and Murf AI, are not determined in this field. An examination of the relationship between the frequency of use of ChatGPT and all studied GenAI systems with the climate change periods shows that the frequency of use of Google Gemini, Microsoft Copilot, Midjourney, DALL-E and Wombo AI does not affect the perception of the expected climate change.

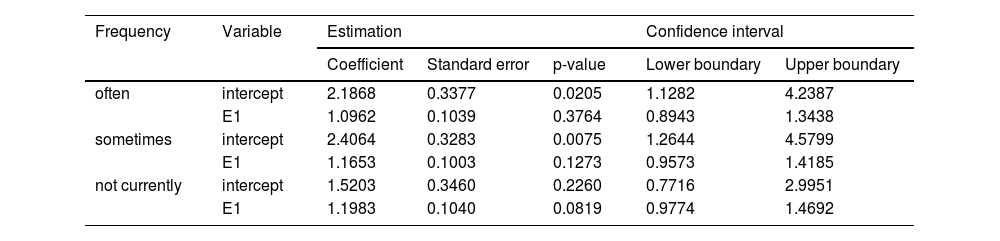

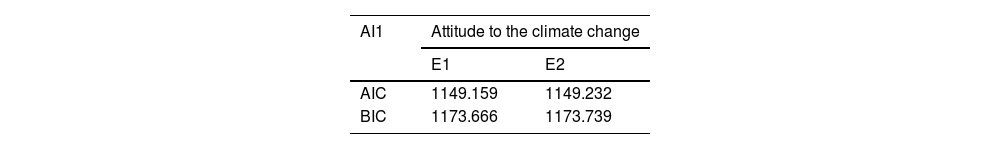

The regression models are offered in the subsequent tables, beginning with the frequency of ChatGPT use to the importance of the climate change issue logistic regression model in Table 2.

Table 2. The frequency of ChatGPT use to the importance of the climate-change issue regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 2.1868 | 0.3377 | 0.0205 | 1.1282 | 4.2387 |
| often | E1 | 1.0962 | 0.1039 | 0.3764 | 0.8943 | 1.3438 |
| sometimes | intercept | 2.4064 | 0.3283 | 0.0075 | 1.2644 | 4.5799 |
| sometimes | E1 | 1.1653 | 0.1003 | 0.1273 | 0.9573 | 1.4185 |
| not currently | intercept | 1.5203 | 0.3460 | 0.2260 | 0.7716 | 2.9951 |
| not currently | E1 | 1.1983 | 0.1040 | 0.0819 | 0.9774 | 1.4692 |

Source: Own elaboration by the authors.

As seen in Table 2, the importance of the climate-change issue is modelled via the frequency of ChatGPT use. Respondents who use ChatGPT often see the importance of the climate change issue at a lower level and vice versa. The odds that respondents who use ChatGPT often consider the climate-change issue less important are 9.62 % higher. Similarly, the odds that respondents who use ChatGPT only sometimes consider the climate-change issue less important are 16.53 % higher. Finally, the odds that respondents who do not currently use ChatGPT consider the climate-change issue less important are 19.83 % higher. These outcomes show a visible inverse proportion, that is, the more often the respondents use ChatGPT, the less they consider the climate-change issue important.
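The percentages quoted above follow directly from the tabulated coefficients. The sketch below assumes that the coefficients in Table 2 are exponentiated (that is, odds ratios) and that the standard errors are on the log-odds scale; this reading is an assumption, although it reproduces the reported confidence boundaries to rounding. The function names are hypothetical.

```python
import math


def percent_change_in_odds(odds_ratio):
    """Percentage change in odds implied by an odds ratio (1.0962 -> 9.62 %)."""
    return (odds_ratio - 1.0) * 100.0


def wald_confidence_interval(odds_ratio, std_error, z=1.96):
    """Approximate 95 % Wald interval, built on the log scale and exponentiated back."""
    log_or = math.log(odds_ratio)
    return math.exp(log_or - z * std_error), math.exp(log_or + z * std_error)


# E1 row for the "often" category of Table 2.
change = percent_change_in_odds(1.0962)
lower, upper = wald_confidence_interval(1.0962, 0.1039)
print(f"{change:.2f} % higher odds, interval {lower:.4f} to {upper:.4f}")
```

An interval that contains 1 corresponds to an odds ratio that is not statistically distinguishable from no effect at the 5 % level.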

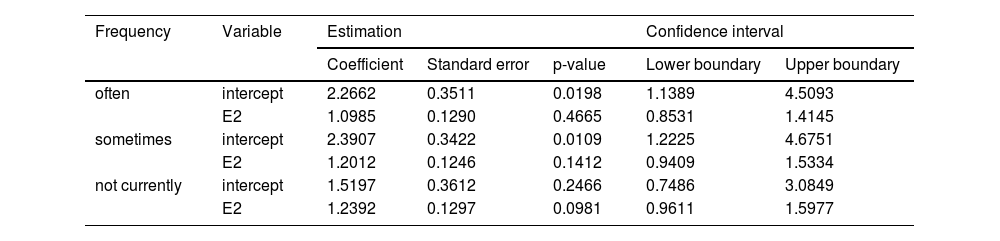

Table 3 demonstrates the relationship between frequency of ChatGPT use and the climate-change impact period via the logistic regression model.

Table 3. The frequency of ChatGPT use to the climate-change impact period regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 2.2662 | 0.3511 | 0.0198 | 1.1389 | 4.5093 |
| often | E2 | 1.0985 | 0.1290 | 0.4665 | 0.8531 | 1.4145 |
| sometimes | intercept | 2.3907 | 0.3422 | 0.0109 | 1.2225 | 4.6751 |
| sometimes | E2 | 1.2012 | 0.1246 | 0.1412 | 0.9409 | 1.5334 |
| not currently | intercept | 1.5197 | 0.3612 | 0.2466 | 0.7486 | 3.0849 |
| not currently | E2 | 1.2392 | 0.1297 | 0.0981 | 0.9611 | 1.5977 |

Source: Own elaboration by the authors.

Table 3 shows a clear pattern. Respondents who use ChatGPT often see the climate-change impact period further in the future, meaning the climate change is not happening yet, and vice versa. The odds that respondents who use ChatGPT often consider the climate-change impact period later in the future are 9.85 % higher. The odds that respondents who use ChatGPT only sometimes see the climate-change impact period later in the future are 20.12 % higher. Finally, respondents who do not currently use ChatGPT consider the climate-change impact to happen in the future, with odds 23.92 % higher. The outcomes demonstrate that the more often the respondents use ChatGPT, the later they see climate change happening.

Environmental impact of data centres

RQ 2 is based on the Czech population's willingness to accept a change in behaviour owing to the environmental impact of data centres or GenAI in the digital media ecosystem. It includes a mixture of six interconnected environmental and sociological questions. With regard to the previously examined importance of climate-change issue perception and climate-change impact period, two other questions investigating sharing via social networks and communicating via various platforms are added.

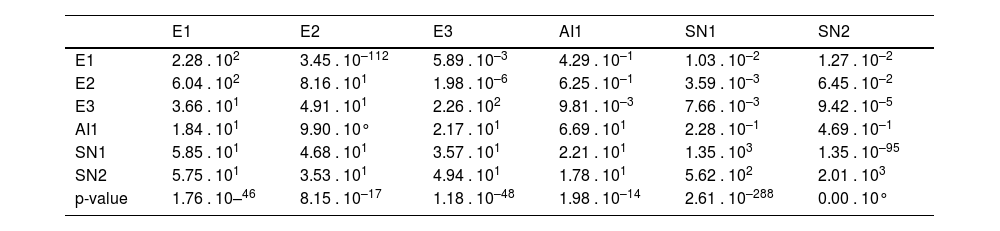

The matrix of the testing outcome of the relationships between environmental and social network attitudes is offered in Table 4.

Table 4. Testing the environmental impact in the digital media ecosystem.

| | E1 | E2 | E3 | AI1 | SN1 | SN2 |
|---|---|---|---|---|---|---|
| E1 | 2.28 × 10^2 | 3.45 × 10^-112 | 5.89 × 10^-3 | 4.29 × 10^-1 | 1.03 × 10^-2 | 1.27 × 10^-2 |
| E2 | 6.04 × 10^2 | 8.16 × 10^1 | 1.98 × 10^-6 | 6.25 × 10^-1 | 3.59 × 10^-3 | 6.45 × 10^-2 |
| E3 | 3.66 × 10^1 | 4.91 × 10^1 | 2.26 × 10^2 | 9.81 × 10^-3 | 7.66 × 10^-3 | 9.42 × 10^-5 |
| AI1 | 1.84 × 10^1 | 9.90 × 10^0 | 2.17 × 10^1 | 6.69 × 10^1 | 2.28 × 10^-1 | 4.69 × 10^-1 |
| SN1 | 5.85 × 10^1 | 4.68 × 10^1 | 3.57 × 10^1 | 2.21 × 10^1 | 1.35 × 10^3 | 1.35 × 10^-95 |
| SN2 | 5.75 × 10^1 | 3.53 × 10^1 | 4.94 × 10^1 | 1.78 × 10^1 | 5.62 × 10^2 | 2.01 × 10^3 |
| p-value | 1.76 × 10^-46 | 8.15 × 10^-17 | 1.18 × 10^-48 | 1.98 × 10^-14 | 2.61 × 10^-288 | 0.00 × 10^0 |

Source: Own elaboration by the authors.

Table 4 represents a matrix whose lower half demonstrates test statistics values and upper half p-values. From an individual evaluation point of view, all environmental and social network questions are assigned random distribution of probability. Regarding the mutual pairs of the tested questions, the frequency of ChatGPT use demonstrates more occasions of biased distribution. Its relationship to the importance of solving the climate-change issue, the climate-change impact period, as well as the data centre purpose and sharing on the social networks’ profiles point to biased relationships. All other pairs demonstrate random distribution of probability, with the exception of the pair involving the climate-change impact period and frequency of online communication, whose p-value of 6.45 × 10^-2 stands only slightly over a statistical significance threshold of 5 %.
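Because the matrix stores two different quantities in its two halves, reading a pair requires looking at two mirrored cells. The following sketch shows one way to do this; the names `LABELS`, `MATRIX` and `pair_result` are hypothetical, and the values are transcribed from Table 4.

```python
LABELS = ["E1", "E2", "E3", "AI1", "SN1", "SN2"]

# Lower triangle: test statistics; upper triangle: p-values; diagonal: single-variable statistics.
MATRIX = [
    [228.0, 3.45e-112, 5.89e-3, 4.29e-1, 1.03e-2, 1.27e-2],
    [604.0, 81.6, 1.98e-6, 6.25e-1, 3.59e-3, 6.45e-2],
    [36.6, 49.1, 226.0, 9.81e-3, 7.66e-3, 9.42e-5],
    [18.4, 9.90, 21.7, 66.9, 2.28e-1, 4.69e-1],
    [58.5, 46.8, 35.7, 22.1, 1350.0, 1.35e-95],
    [57.5, 35.3, 49.4, 17.8, 562.0, 2010.0],
]


def pair_result(first, second):
    """Return (test statistic, p-value) for a pair of distinct variables."""
    i, j = LABELS.index(first), LABELS.index(second)
    if i == j:
        raise ValueError("a pair needs two different variables")
    low, high = min(i, j), max(i, j)
    # Statistic sits below the diagonal, p-value in the mirrored cell above it.
    return MATRIX[high][low], MATRIX[low][high]


print(pair_result("E2", "SN2"))
```

For the pair of the climate-change impact period and online communication, this returns the statistic 35.3 together with the p-value 6.45 × 10^-2 discussed above.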

The analytical outcomes show interesting findings. No statistically significant relationship is found between the respondents’ answers to the questions about knowledge and the use of data centres in relation to the frequency of ChatGPT use, perception of the climate-change importance, and expected climate-change period. Moreover, such a relationship does not exist between the frequency of ChatGPT use and the frequency of social networks use. The same applies to the frequency of use of communication platforms that enable sharing messages and multimedia. Conversely, a statistically significant relationship exists between the perception of climate-change importance and the frequency of use of ChatGPT, social networks and communication platforms. A statistically significant relationship exists between the frequency of ChatGPT use and the frequency of use of social networks and communication platforms. These outcomes also confirm no relationship between the frequency of use of social networks and the frequency of use of communication platforms.

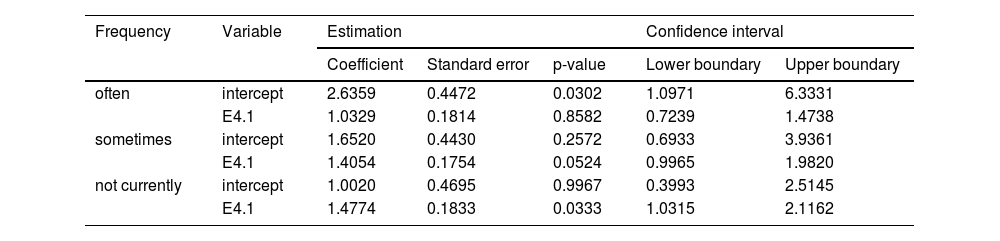

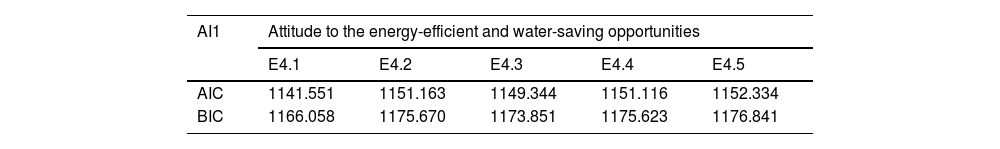

The modelling phase of the second question follows with the five statements tied to the frequency of ChatGPT use. The first one, devoted to changing email address, is illustrated by Table 5.

Table 5. The frequency of ChatGPT use to changing email address regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 2.6359 | 0.4472 | 0.0302 | 1.0971 | 6.3331 |
| often | E4.1 | 1.0329 | 0.1814 | 0.8582 | 0.7239 | 1.4738 |
| sometimes | intercept | 1.6520 | 0.4430 | 0.2572 | 0.6933 | 3.9361 |
| sometimes | E4.1 | 1.4054 | 0.1754 | 0.0524 | 0.9965 | 1.9820 |
| not currently | intercept | 1.0020 | 0.4695 | 0.9967 | 0.3993 | 2.5145 |
| not currently | E4.1 | 1.4774 | 0.1833 | 0.0333 | 1.0315 | 2.1162 |

Source: Own elaboration by the authors.

The decision to change email address to another provider after learning more details about the environmental impact of technology companies’ data-centre operation is demonstrated in Table 5. The table shows an indirect dependence, meaning the lower the frequency of ChatGPT use, the higher the odds of being reluctant to change email address to another provider. Respondents who use ChatGPT often have 3.29 % higher odds of being reluctant to change email address to another provider. The odds are 40.54 % higher for respondents who use ChatGPT sometimes and 47.74 % higher for respondents who do not currently use ChatGPT. The low frequency of ChatGPT use indicates the respondents’ lower willingness to change email address provider.
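The p-values in Table 5 can be cross-checked against the coefficients and standard errors. The sketch below assumes, as before, that the tabulated coefficient is an odds ratio with its standard error on the log-odds scale, and that a two-sided Wald z-test was used; both points are assumptions rather than statements from the study, and `wald_p_value` is a hypothetical name.

```python
import math


def wald_p_value(odds_ratio, std_error):
    """Two-sided p-value of a Wald z-test for an odds ratio against 1."""
    z = math.log(odds_ratio) / std_error
    # For a standard normal variable, 2 * (1 - Phi(|z|)) equals erfc(|z| / sqrt(2)).
    return math.erfc(abs(z) / math.sqrt(2.0))


# E4.1 rows of Table 5: (frequency, coefficient, standard error).
rows = [
    ("often", 1.0329, 0.1814),
    ("sometimes", 1.4054, 0.1754),
    ("not currently", 1.4774, 0.1833),
]
for frequency, coefficient, std_error in rows:
    print(frequency, round(wald_p_value(coefficient, std_error), 4))
```

Under these assumptions, only the "not currently" category falls below the 5 % threshold, while "sometimes" lies just above it.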

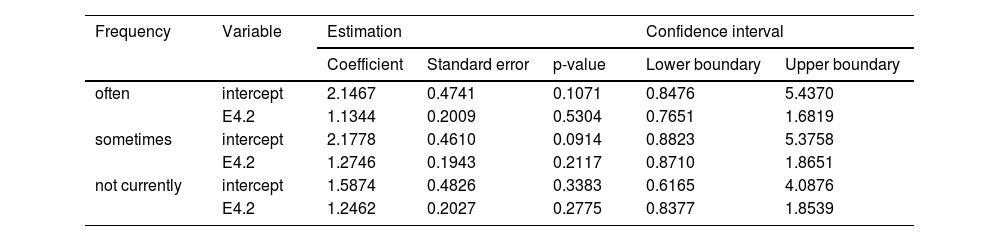

The second question, which addresses the transfer of one's own data to an energy-efficient cloud provider, is offered in Table 6.

Table 6. The frequency of ChatGPT use to transferring own data to an energy-efficient cloud provider regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 2.1467 | 0.4741 | 0.1071 | 0.8476 | 5.4370 |
| often | E4.2 | 1.1344 | 0.2009 | 0.5304 | 0.7651 | 1.6819 |
| sometimes | intercept | 2.1778 | 0.4610 | 0.0914 | 0.8823 | 5.3758 |
| sometimes | E4.2 | 1.2746 | 0.1943 | 0.2117 | 0.8710 | 1.8651 |
| not currently | intercept | 1.5874 | 0.4826 | 0.3383 | 0.6165 | 4.0876 |
| not currently | E4.2 | 1.2462 | 0.2027 | 0.2775 | 0.8377 | 1.8539 |

Source: Own elaboration by the authors.

Table 6 demonstrates the outcomes of the regression model representing the relationship between the frequency of ChatGPT use and transferring own data to an energy-efficient cloud provider. It shows an almost indirect dependence, meaning that the lower the frequency of ChatGPT use, the higher the odds of being reluctant to transfer own data to an energy-efficient cloud provider. Respondents who use ChatGPT often have 13.44 % higher odds of being reluctant to transfer own data to a more energy-efficient provider. These odds are 27.46 % higher for respondents who use ChatGPT sometimes and 24.62 % higher for respondents who do not currently use ChatGPT. These outcomes demonstrate that the low frequency of ChatGPT use indicates the respondents’ lower willingness to transfer own data to a more energy-efficient provider.

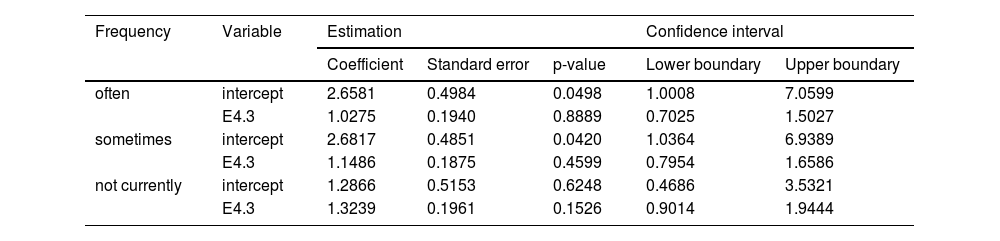

The third question, which addresses leaving a favourite social network that does not use an energy-efficient data centre, is shown in Table 7.

Table 7. The frequency of ChatGPT use to leaving a favourite social network that does not use an energy-efficient data centre regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 2.6581 | 0.4984 | 0.0498 | 1.0008 | 7.0599 |
| often | E4.3 | 1.0275 | 0.1940 | 0.8889 | 0.7025 | 1.5027 |
| sometimes | intercept | 2.6817 | 0.4851 | 0.0420 | 1.0364 | 6.9389 |
| sometimes | E4.3 | 1.1486 | 0.1875 | 0.4599 | 0.7954 | 1.6586 |
| not currently | intercept | 1.2866 | 0.5153 | 0.6248 | 0.4686 | 3.5321 |
| not currently | E4.3 | 1.3239 | 0.1961 | 0.1526 | 0.9014 | 1.9444 |

Source: Own elaboration by the authors.

Table 7 illustrates the regression model demonstrating the relationship between frequency of ChatGPT use and leaving a favourite social network that does not use an energy-efficient data centre. Overall, a clear indirect dependence is exemplified, meaning the lower the frequency of ChatGPT use, the higher the odds of being reluctant to leave a favourite social network that does not use an energy-efficient data centre. Respondents who use ChatGPT often have 2.75 % higher odds of being reluctant to leave a favourite social network that does not use an energy-efficient data centre. These odds are 14.86 % higher for respondents who use ChatGPT sometimes and 32.39 % higher for respondents who do not currently use ChatGPT. These outcomes allow the conclusion that the low frequency of ChatGPT use demonstrates a lower tendency for the respondents to leave a social network that does not use an energy-efficient data centre.

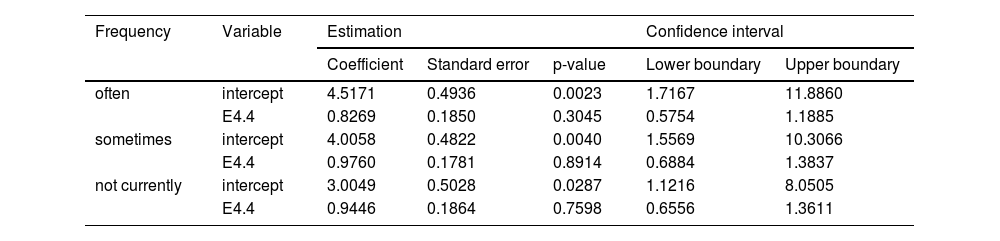

The fourth question, which addresses the situation in which one stops using a favourite streaming platform that does not use energy-efficient data centres, is shown in Table 8.

Table 8. The frequency of ChatGPT use to stopping the use of a favourite streaming platform that does not use energy-efficient data centres regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 4.5171 | 0.4936 | 0.0023 | 1.7167 | 11.8860 |
| often | E4.4 | 0.8269 | 0.1850 | 0.3045 | 0.5754 | 1.1885 |
| sometimes | intercept | 4.0058 | 0.4822 | 0.0040 | 1.5569 | 10.3066 |
| sometimes | E4.4 | 0.9760 | 0.1781 | 0.8914 | 0.6884 | 1.3837 |
| not currently | intercept | 3.0049 | 0.5028 | 0.0287 | 1.1216 | 8.0505 |
| not currently | E4.4 | 0.9446 | 0.1864 | 0.7598 | 0.6556 | 1.3611 |

Source: Own elaboration by the authors.

Table 8 offers the outcomes of the regression model representing the relationship between the frequency of ChatGPT use and stopping the use of a favourite streaming platform that does not use energy-efficient data centres. All in all, it shows a partial indirect dependence, meaning that the lower the frequency of ChatGPT use, the lower the odds of being reluctant to stop using a favourite streaming platform that does not use energy-efficient data centres. Respondents who use ChatGPT often have 17.31 % lower odds of being reluctant to stop using a favourite streaming platform that does not use energy-efficient data centres. These odds are 2.40 % lower for respondents who use ChatGPT sometimes and 5.54 % lower for respondents who do not currently use ChatGPT. Thus, the low frequency of ChatGPT use demonstrates a higher tendency for the respondents to leave their favourite streaming platform that does not use an energy-efficient data centre.

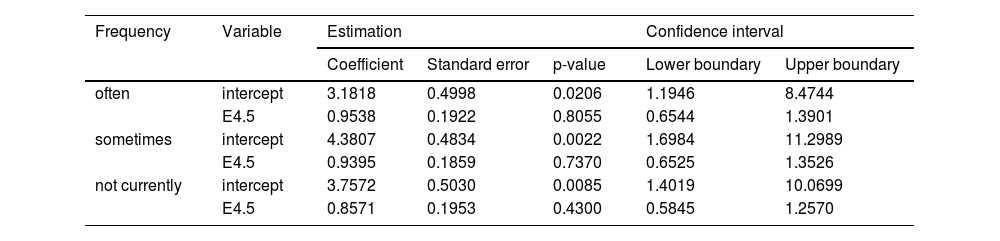

The fifth question, which addresses the situation in which one stops using a favourite AI system that does not use an energy-efficient data centre, is revealed in Table 9.

Table 9. The frequency of ChatGPT use to stopping the use of a favourite AI system that does not use energy-efficient data centres regression model.

| Frequency | Variable | Coefficient | Standard error | p-value | Confidence interval: lower boundary | Confidence interval: upper boundary |
|---|---|---|---|---|---|---|
| often | intercept | 3.1818 | 0.4998 | 0.0206 | 1.1946 | 8.4744 |
| often | E4.5 | 0.9538 | 0.1922 | 0.8055 | 0.6544 | 1.3901 |
| sometimes | intercept | 4.3807 | 0.4834 | 0.0022 | 1.6984 | 11.2989 |
| sometimes | E4.5 | 0.9395 | 0.1859 | 0.7370 | 0.6525 | 1.3526 |
| not currently | intercept | 3.7572 | 0.5030 | 0.0085 | 1.4019 | 10.0699 |
| not currently | E4.5 | 0.8571 | 0.1953 | 0.4300 | 0.5845 | 1.2570 |

Source: Own elaboration by the authors.

Table 9 shows the relationship between the frequency of ChatGPT use and stopping the use of a favourite GenAI system that does not use energy-efficient data centres. Overall, it illustrates a direct dependence: the lower the frequency of ChatGPT use, the lower the odds of being reluctant to stop using a favourite GenAI system that does not use an energy-efficient data centre. Respondents who use ChatGPT often have 4.62 % lower odds of being reluctant to stop using such a system. These odds are 6.05 % lower for respondents who use ChatGPT sometimes and 14.29 % lower for respondents who do not currently use ChatGPT (coefficient 0.8571 in Table 9). Thus, the low frequency of ChatGPT use indicates a higher willingness of the respondents to stop using a favourite GenAI system that does not use an energy-efficient data centre.
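The percentages quoted from Table 9 can be recomputed from the tabulated values. The following is a minimal sketch, not the authors' code. It assumes that the "Coefficient" column holds odds ratios, that the standard error refers to the log-odds scale, and that the confidence intervals are 95 % Wald intervals; the function name `summarise_odds_ratio` is hypothetical. Under these assumptions the sketch reproduces the E4.5 rows of Table 9 up to rounding.

```python
import math

Z_95 = 1.959964  # two-sided 95 % normal quantile (assumed confidence level)


def summarise_odds_ratio(odds_ratio, std_error, z=Z_95):
    """Return (percent change in odds, CI lower, CI upper, two-sided p-value).

    Assumption: std_error is the standard error of log(odds_ratio).
    """
    log_or = math.log(odds_ratio)
    pct_change = (odds_ratio - 1.0) * 100.0       # e.g. 0.9538 -> -4.62 %
    lower = math.exp(log_or - z * std_error)      # Wald interval, back-transformed
    upper = math.exp(log_or + z * std_error)
    z_stat = log_or / std_error                   # Wald z statistic
    p_value = math.erfc(abs(z_stat) / math.sqrt(2.0))
    return pct_change, lower, upper, p_value


# E4.5 rows of Table 9: frequency -> (coefficient, standard error)
table9_e45 = {
    "often": (0.9538, 0.1922),
    "sometimes": (0.9395, 0.1859),
    "not currently": (0.8571, 0.1953),
}

for frequency, (coef, se) in table9_e45.items():
    pct, lo, hi, p = summarise_odds_ratio(coef, se)
    print(f"{frequency}: {pct:+.2f} % odds, CI [{lo:.4f}, {hi:.4f}], p = {p:.4f}")
```

A negative percentage corresponds to the "lower odds" wording used in the text. An interval that contains 1 means that the estimated change in odds is not distinguishable from zero at the assumed confidence level.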

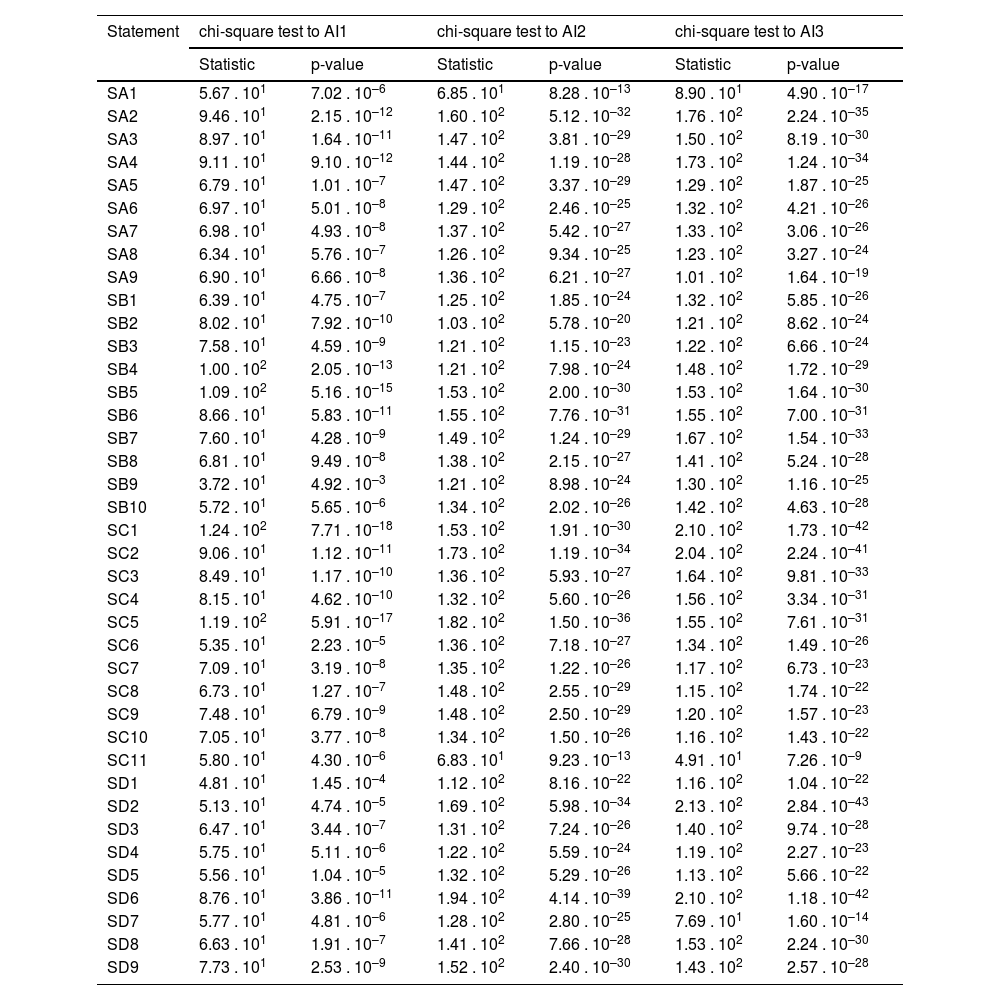

Behavioural change

RQ 3 investigates the relationship between AI literacy and declared behavioural change owing to the environmental demand of GenAI. It includes four groups of statements expressing various attitudes of the respondents.

Firstly, behavioural change owing to the environmental demand of GenAI is illustrated in Table 10.

Testing of behavioural change owing to the environmental demand of GenAI.

| Statement | AI1: chi-square statistic | AI1: p-value | AI2: chi-square statistic | AI2: p-value | AI3: chi-square statistic | AI3: p-value |
|---|---|---|---|---|---|---|
| SA1 | 5.67 × 10^1 | 7.02 × 10^-6 | 6.85 × 10^1 | 8.28 × 10^-13 | 8.90 × 10^1 | 4.90 × 10^-17 |
| SA2 | 9.46 × 10^1 | 2.15 × 10^-12 | 1.60 × 10^2 | 5.12 × 10^-32 | 1.76 × 10^2 | 2.24 × 10^-35 |
| SA3 | 8.97 × 10^1 | 1.64 × 10^-11 | 1.47 × 10^2 | 3.81 × 10^-29 | 1.50 × 10^2 | 8.19 × 10^-30 |
| SA4 | 9.11 × 10^1 | 9.10 × 10^-12 | 1.44 × 10^2 | 1.19 × 10^-28 | 1.73 × 10^2 | 1.24 × 10^-34 |
| SA5 | 6.79 × 10^1 | 1.01 × 10^-7 | 1.47 × 10^2 | 3.37 × 10^-29 | 1.29 × 10^2 | 1.87 × 10^-25 |
| SA6 | 6.97 × 10^1 | 5.01 × 10^-8 | 1.29 × 10^2 | 2.46 × 10^-25 | 1.32 × 10^2 | 4.21 × 10^-26 |
| SA7 | 6.98 × 10^1 | 4.93 × 10^-8 | 1.37 × 10^2 | 5.42 × 10^-27 | 1.33 × 10^2 | 3.06 × 10^-26 |
| SA8 | 6.34 × 10^1 | 5.76 × 10^-7 | 1.26 × 10^2 | 9.34 × 10^-25 | 1.23 × 10^2 | 3.27 × 10^-24 |
| SA9 | 6.90 × 10^1 | 6.66 × 10^-8 | 1.36 × 10^2 | 6.21 × 10^-27 | 1.01 × 10^2 | 1.64 × 10^-19 |
| SB1 | 6.39 × 10^1 | 4.75 × 10^-7 | 1.25 × 10^2 | 1.85 × 10^-24 | 1.32 × 10^2 | 5.85 × 10^-26 |
| SB2 | 8.02 × 10^1 | 7.92 × 10^-10 | 1.03 × 10^2 | 5.78 × 10^-20 | 1.21 × 10^2 | 8.62 × 10^-24 |
| SB3 | 7.58 × 10^1 | 4.59 × 10^-9 | 1.21 × 10^2 | 1.15 × 10^-23 | 1.22 × 10^2 | 6.66 × 10^-24 |
| SB4 | 1.00 × 10^2 | 2.05 × 10^-13 | 1.21 × 10^2 | 7.98 × 10^-24 | 1.48 × 10^2 | 1.72 × 10^-29 |
| SB5 | 1.09 × 10^2 | 5.16 × 10^-15 | 1.53 × 10^2 | 2.00 × 10^-30 | 1.53 × 10^2 | 1.64 × 10^-30 |
| SB6 | 8.66 × 10^1 | 5.83 × 10^-11 | 1.55 × 10^2 | 7.76 × 10^-31 | 1.55 × 10^2 | 7.00 × 10^-31 |
| SB7 | 7.60 × 10^1 | 4.28 × 10^-9 | 1.49 × 10^2 | 1.24 × 10^-29 | 1.67 × 10^2 | 1.54 × 10^-33 |
| SB8 | 6.81 × 10^1 | 9.49 × 10^-8 | 1.38 × 10^2 | 2.15 × 10^-27 | 1.41 × 10^2 | 5.24 × 10^-28 |
| SB9 | 3.72 × 10^1 | 4.92 × 10^-3 | 1.21 × 10^2 | 8.98 × 10^-24 | 1.30 × 10^2 | 1.16 × 10^-25 |
| SB10 | 5.72 × 10^1 | 5.65 × 10^-6 | 1.34 × 10^2 | 2.02 × 10^-26 | 1.42 × 10^2 | 4.63 × 10^-28 |
| SC1 | 1.24 × 10^2 | 7.71 × 10^-18 | 1.53 × 10^2 | 1.91 × 10^-30 | 2.10 × 10^2 | 1.73 × 10^-42 |
| SC2 | 9.06 × 10^1 | 1.12 × 10^-11 | 1.73 × 10^2 | 1.19 × 10^-34 | 2.04 × 10^2 | 2.24 × 10^-41 |
| SC3 | 8.49 × 10^1 | 1.17 × 10^-10 | 1.36 × 10^2 | 5.93 × 10^-27 | 1.64 × 10^2 | 9.81 × 10^-33 |
| SC4 | 8.15 × 10^1 | 4.62 × 10^-10 | 1.32 × 10^2 | 5.60 × 10^-26 | 1.56 × 10^2 | 3.34 × 10^-31 |
| SC5 | 1.19 × 10^2 | 5.91 × 10^-17 | 1.82 × 10^2 | 1.50 × 10^-36 | 1.55 × 10^2 | 7.61 × 10^-31 |
| SC6 | 5.35 × 10^1 | 2.23 × 10^-5 | 1.36 × 10^2 | 7.18 × 10^-27 | 1.34 × 10^2 | 1.49 × 10^-26 |
| SC7 | 7.09 × 10^1 | 3.19 × 10^-8 | 1.35 × 10^2 | 1.22 × 10^-26 | 1.17 × 10^2 | 6.73 × 10^-23 |
| SC8 | 6.73 × 10^1 | 1.27 × 10^-7 | 1.48 × 10^2 | 2.55 × 10^-29 | 1.15 × 10^2 | 1.74 × 10^-22 |
| SC9 | 7.48 × 10^1 | 6.79 × 10^-9 | 1.48 × 10^2 | 2.50 × 10^-29 | 1.20 × 10^2 | 1.57 × 10^-23 |
| SC10 | 7.05 × 10^1 | 3.77 × 10^-8 | 1.34 × 10^2 | 1.50 × 10^-26 | 1.16 × 10^2 | 1.43 × 10^-22 |
| SC11 | 5.80 × 10^1 | 4.30 × 10^-6 | 6.83 × 10^1 | 9.23 × 10^-13 | 4.91 × 10^1 | 7.26 × 10^-9 |
| SD1 | 4.81 × 10^1 | 1.45 × 10^-4 | 1.12 × 10^2 | 8.16 × 10^-22 | 1.16 × 10^2 | 1.04 × 10^-22 |
| SD2 | 5.13 × 10^1 | 4.74 × 10^-5 | 1.69 × 10^2 | 5.98 × 10^-34 | 2.13 × 10^2 | 2.84 × 10^-43 |
| SD3 | 6.47 × 10^1 | 3.44 × 10^-7 | 1.31 × 10^2 | 7.24 × 10^-26 | 1.40 × 10^2 | 9.74 × 10^-28 |
| SD4 | 5.75 × 10^1 | 5.11 × 10^-6 | 1.22 × 10^2 | 5.59 × 10^-24 | 1.19 × 10^2 | 2.27 × 10^-23 |
| SD5 | 5.56 × 10^1 | 1.04 × 10^-5 | 1.32 × 10^2 | 5.29 × 10^-26 | 1.13 × 10^2 | 5.66 × 10^-22 |
| SD6 | 8.76 × 10^1 | 3.86 × 10^-11 | 1.94 × 10^2 | 4.14 × 10^-39 | 2.10 × 10^2 | 1.18 × 10^-42 |
| SD7 | 5.77 × 10^1 | 4.81 × 10^-6 | 1.28 × 10^2 | 2.80 × 10^-25 | 7.69 × 10^1 | 1.60 × 10^-14 |
| SD8 | 6.63 × 10^1 | 1.91 × 10^-7 | 1.41 × 10^2 | 7.66 × 10^-28 | 1.53 × 10^2 | 2.24 × 10^-30 |
| SD9 | 7.73 × 10^1 | 2.53 × 10^-9 | 1.52 × 10^2 | 2.40 × 10^-30 | 1.43 × 10^2 | 2.57 × 10^-28 |

Source: Own elaboration by the authors.

Behavioural change, as shown in Table 10, is interpreted in a homogeneous way. For all statements, the relationships to the general questions on the frequency of ChatGPT use and on the use of Gemini and Microsoft Copilot align with a random distribution of probability. This points to homogeneity of the respondents’ responses in this field and opens up space for further investigation.
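The p-values in Table 10 can be recomputed from the reported chi-square statistics once the degrees of freedom are known. The section does not state them, so the sketch below treats them as an assumption: 18 for the AI1 tests and 6 for the AI2 and AI3 tests, values chosen only because they reproduce the reported p-values to within rounding. The helper `chi2_sf_even_df` is hypothetical and uses the closed-form upper tail that exists for even degrees of freedom, so it needs only the standard library.

```python
import math


def chi2_sf_even_df(statistic, df):
    """Upper-tail probability P(X >= statistic) for a chi-square variable.

    Valid for even df only: exp(-x/2) * sum_{i=0}^{df/2 - 1} (x/2)^i / i!
    """
    if df <= 0 or df % 2 != 0:
        raise ValueError("closed form requires a positive even df")
    half = statistic / 2.0
    term = 1.0   # current summand (x/2)^i / i!, starting at i = 0
    total = 1.0
    for i in range(1, df // 2):
        term *= half / i
        total += term
    return math.exp(-half) * total


# (statement, test, statistic, ASSUMED df, p-value reported in Table 10)
checks = [
    ("SB9", "AI1", 37.2, 18, 4.92e-3),
    ("SA1", "AI2", 68.5, 6, 8.28e-13),
    ("SA1", "AI3", 89.0, 6, 4.90e-17),
]

for statement, test, stat, df, reported in checks:
    p = chi2_sf_even_df(stat, df)
    print(f"{statement}/{test}: recomputed p = {p:.3e}, reported p = {reported:.3e}")
```

Because the statistics in Table 10 are rounded to three significant figures, the recomputed values can be expected to agree with the reported ones only to within a few percent.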

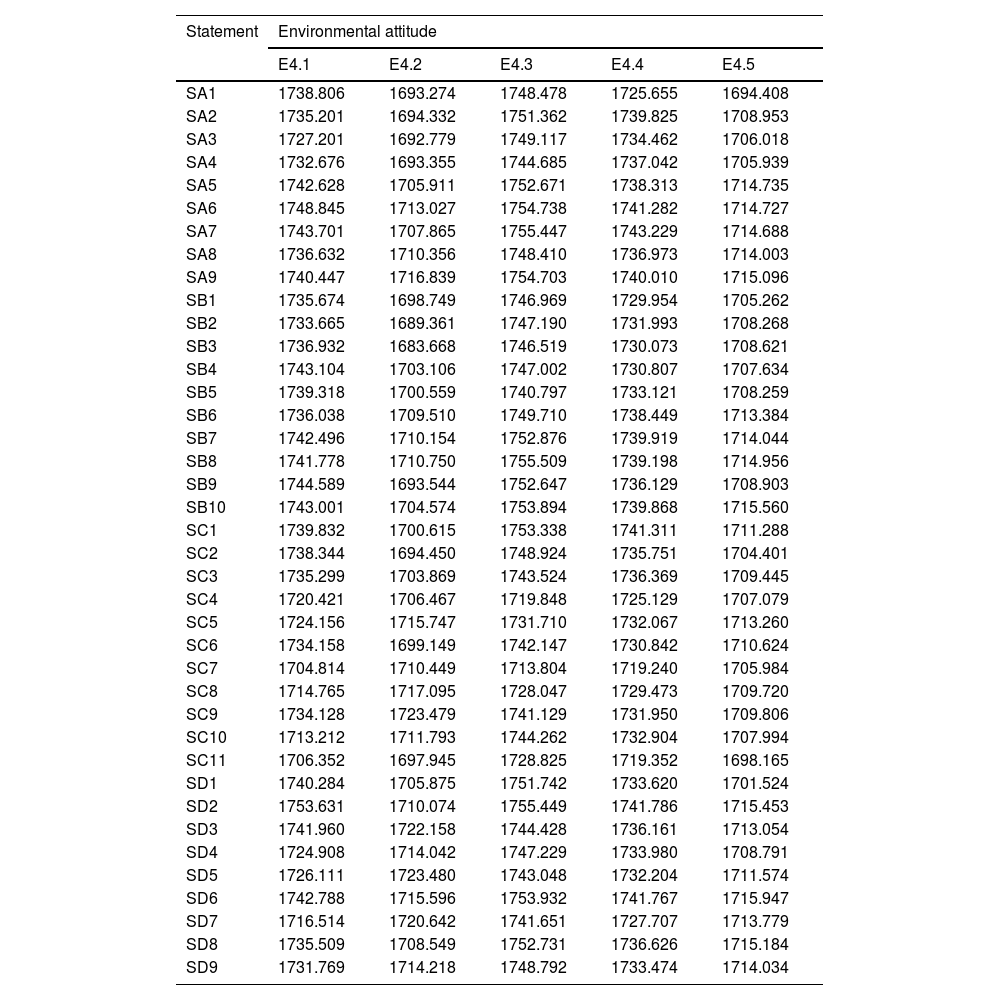

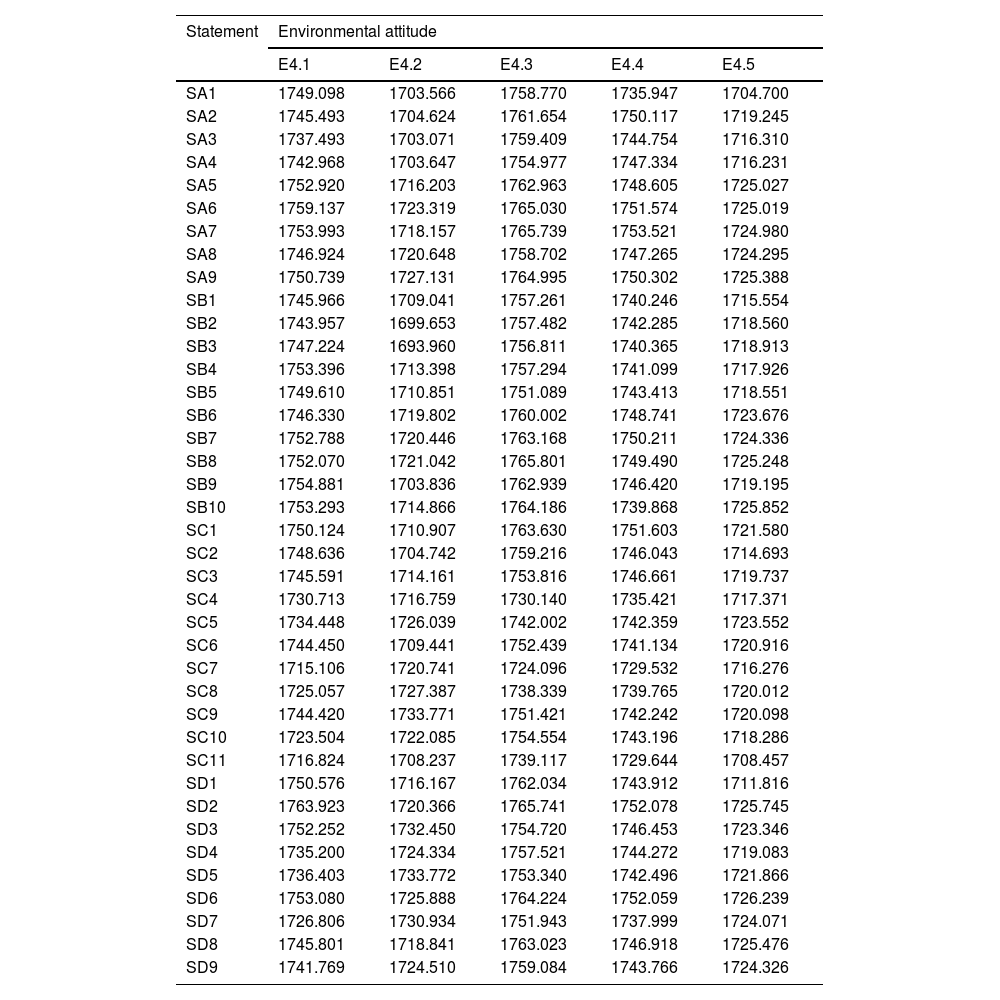

The following subsections address the four fields covered by the statements: internal personal motivation and self-confidence in learning, interest in career and self-confidence when using AI, behavioural aspects, and cognitive aspects and evaluation.

Internal personal motivation and self-confidence in learning

As seen in Table 15 in the appendix, the respondents’ environmental attitudes in relation to internal personal motivation and self-confidence in learning show considerable differences. The first group of attitudes concerns the possibility of changing one's own email address or moving to an email service provider that uses more energy-efficient and water-cooled data centres. Respondents who agree with the usefulness of learning about AI in personal life, study and work have 16.75 % higher odds of being reluctant to change their email address to another provider. These odds are 17.47 % higher for respondents who enjoy education in the field of AI and 20.42 % higher for those who think that self-education in the field of AI enriches their life. Respondents who are interested in discovering new AI technologies have 17.95 % higher odds. These odds are 15.91 % higher for respondents who believe that they can perform tasks related to AI. Those who are sure that they can conduct projects related to AI have 13.22 % higher odds. Respondents who believe that they can gain knowledge and skills associated with AI have 15.72 % higher odds. Respondents who are sure that they will achieve good results in AI tests have 18.74 % higher odds of being reluctant to change their email address; these odds are 17.05 % higher for respondents who are convinced that they understand AI.

The second group of attitudes concerns the possibility of transferring one's own data to a provider that uses more energy-efficient and water-cooled data centres. Respondents who agree with the usefulness of learning about AI in personal life, study and work have 24.52 % higher odds of being reluctant to transfer their own data to another provider. Additionally, respondents who enjoy education in the field of AI have 23.50 % higher odds. Those who think that self-education in the field of AI enriches their life have 24.32 % higher odds, and those who are interested in discovering new AI technologies 23.28 % higher odds. These odds are 21.56 % higher for respondents who believe that they can perform tasks related to AI. Those who are sure that they can conduct projects related to AI have 19.22 % higher odds, and those who believe that they can gain knowledge and skills associated with AI have 21.25 % higher odds. Respondents who are sure that they will achieve good results in AI tests have 20.44 % higher odds of being reluctant to transfer their own data. Respondents who are convinced that they understand AI have 17.77 % higher odds.

The third group of attitudes concerns the possibility of leaving a favourite social network that does not use energy-efficient and water-saving opportunities in its data centre. Respondents who agree with the usefulness of learning about AI in personal life, study and work have 11.62 % higher odds of being reluctant to leave a favourite social network. Furthermore, respondents who enjoy education in the field of AI have 9.78 % higher odds. Those who think that self-education in the field of AI enriches their life have 11.10 % higher odds, and those who are interested in discovering new AI technologies 12.74 % higher odds. These odds are 9.74 % higher for respondents who believe that they can perform tasks related to AI. Those who are sure that they can conduct projects related to AI have 8.46 % higher odds. Respondents who believe that they can gain knowledge and skills associated with AI have 7.97 % higher odds. Respondents who are sure that they will achieve good results in AI tests have 12.44 % higher odds of being reluctant to leave a favourite social network; those who are convinced that they understand AI have 8.50 % higher odds.

The fourth group of attitudes concerns the possibility of stopping the use of a favourite streaming platform that does not use energy-efficient and water-saving opportunities in its data centres. Respondents who agree with the usefulness of learning about AI in personal life, study and work have 14.93 % higher odds of being reluctant to stop using such a streaming platform. Moreover, respondents who enjoy education in the field of AI have 7.32 % higher odds. Those who think that self-education in the field of AI enriches their life have 10.67 % higher odds. Those who are interested in discovering new AI technologies have 8.93 % higher odds. Respondents who believe that they can perform tasks related to AI have 8.96 % higher odds. Those who are sure that they can conduct projects related to AI have 6.77 % higher odds. Respondents who believe that they can gain knowledge and skills associated with AI have 4.77 % higher odds. Respondents who are sure that they will achieve good results in AI tests have 10.06 % higher odds of being reluctant to stop using a favourite streaming platform; those who are convinced that they understand AI have 7.85 % higher odds.

The fifth group of attitudes concerns the possibility of stopping the use of a favourite GenAI platform that does not use energy-efficient and water-saving opportunities in its data centres. Respondents who agree with the usefulness of learning about AI in personal life, study and work have 16.65 % higher odds of being reluctant to stop using such a GenAI platform. Moreover, respondents who enjoy education in the field of AI have 9.68 % higher odds. Those who think that self-education in the field of AI enriches their life have 11.40 % higher odds. Those who are interested in discovering new AI technologies have 11.06 % higher odds. These odds are 6.08 % higher for respondents who believe that they can perform tasks related to AI. Those who think that they can conduct projects related to AI have 6.16 % higher odds. Respondents who believe that they can gain knowledge and skills associated with AI have 6.23 % higher odds. Respondents who are sure that they will achieve good results in AI tests have 6.91 % higher odds of being reluctant to stop using a favourite GenAI platform; those who are convinced that they understand AI have 5.80 % higher odds.